Women Take on the Digital Divide

a multi-part public event series

"Women Take on the Digital Divide" is a multi-part public event series that explores questions such as: How will the digital revolution affect women's work in particular? How can women use concepts such as blockchain or cryptocurrencies for their own emancipation? How are algorithms already influencing women's access to services, jobs, or insurance?

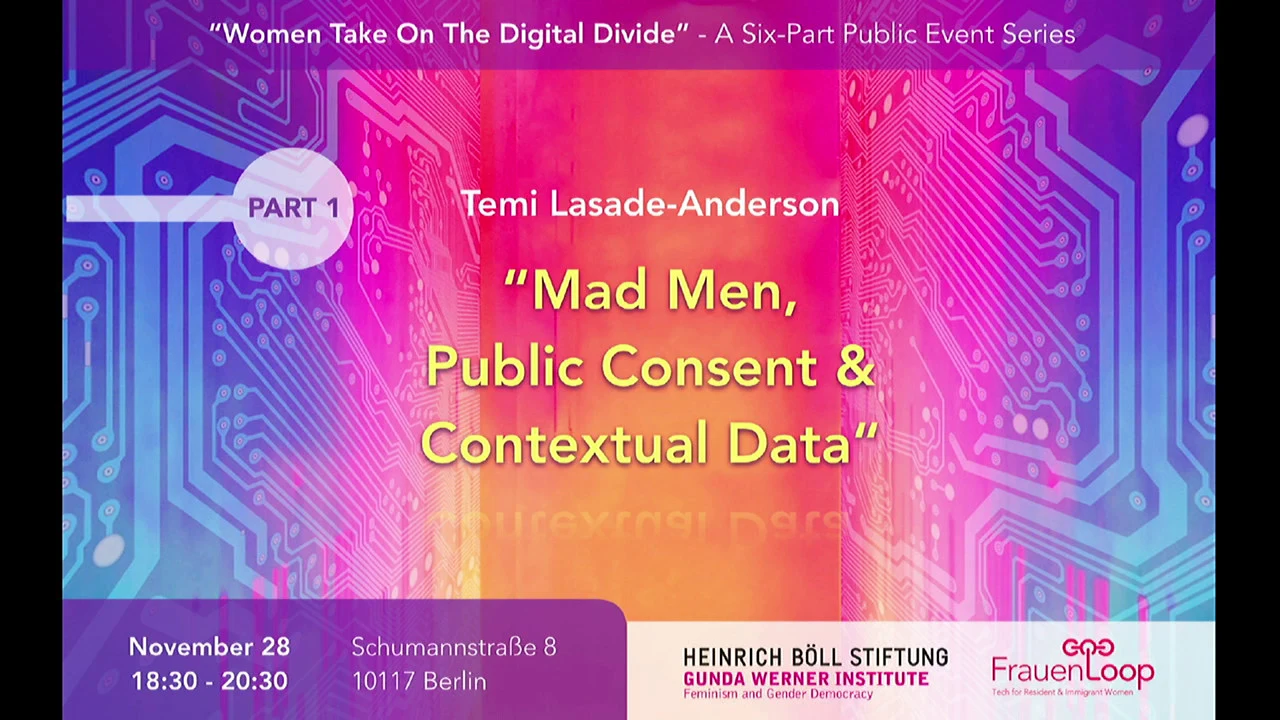

Part 1: “Mad Men”: Public Consent & Data Privacy

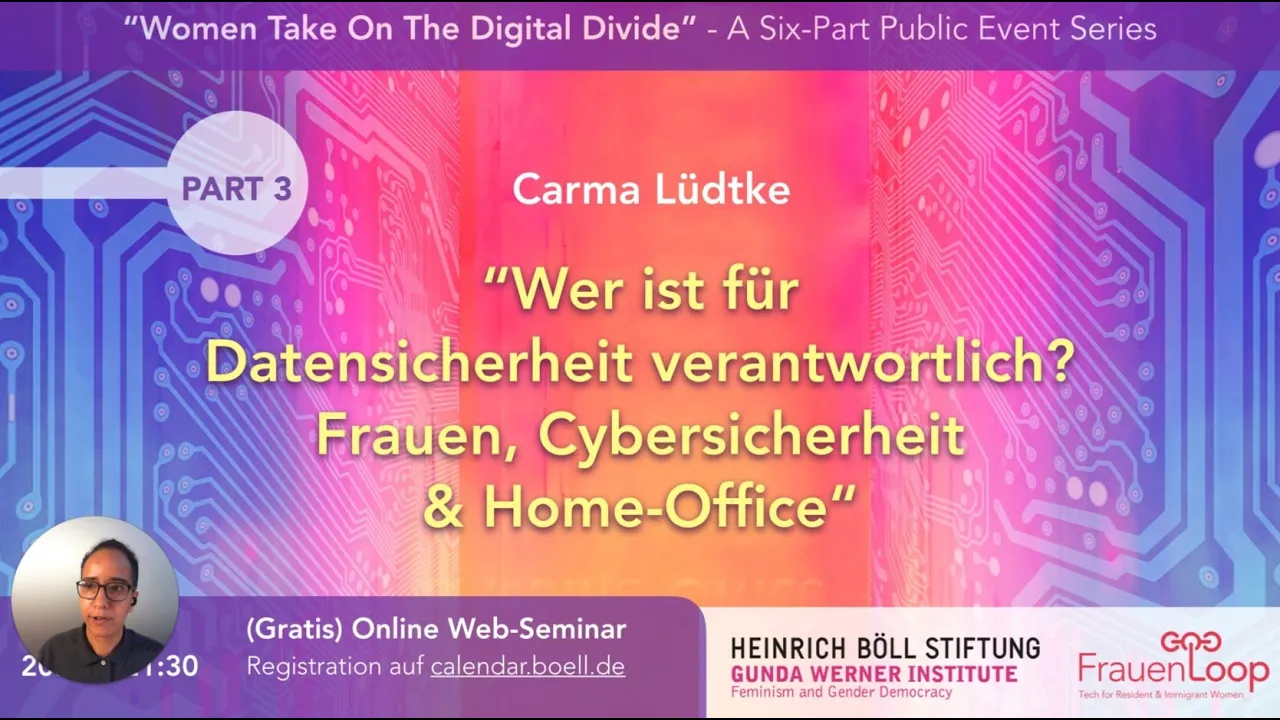

Part 2: Who Is Responsible for Data Security? Women, Cybersecurity & Working from Home

Watch on YouTube

with:

Carma Lüdtke, German cybersecurity expert, CEO of Lacewing Tech, formerly at the Federal Office for Information Security (BSI)

Dr. Nakeema Stefflbauer, FrauenLoop

Francesca Schmidt, Gunda Werner Institute

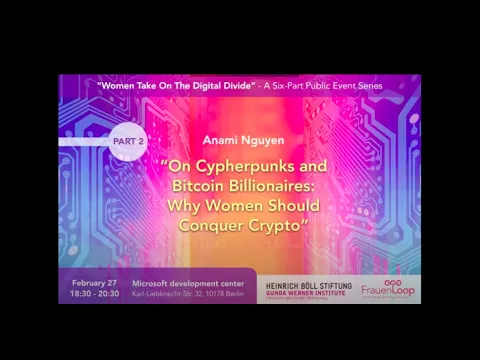

Part 3: On Cypherpunks and Bitcoin Billionaires: Why Women Should Conquer Crypto

Watch on YouTube

with:

Anami Nguyen, NEUFUND

Bitcoin was launched as an antidote to the concentration of power and wealth on Wall Street. However, what started as a vision of “power to the people” has become an industry that largely replicates the biases and exclusivity of the past.

What is the difference between elite investments and a currency market invented to encourage democratic, decentralized funds for all? The Gunda Werner Institute of the Heinrich Böll Foundation, in cooperation with FrauenLoop, invites you to hear from a finance professional with a unique perspective on elite investments, and on why it is crucial for everyone, especially women, to understand and participate in the world of crypto.

Anami Nguyen received a BSc in Finance & Management from NYU Stern and worked as an analyst at J.P. Morgan. She then helped build the finance operations and asset management practices at the Web3 Foundation. She is currently a Business Development Manager at NEUFUND, a blockchain-based financing and investment platform.

Part 4: Computer Vision: Who Benefits and Who Is Harmed?

with:

Dr. Timnit Gebru, computer scientist, technical co-lead of the Ethical Artificial Intelligence Team at Google, and co-author of Gender Shades

Dr. Nakeema Stefflbauer, FrauenLoop

Francesca Schmidt, Gunda Werner Institute

Computer vision has ceased to be a purely academic endeavor. From law enforcement and border control to employment, healthcare diagnostics, and the assignment of trust scores, computer vision systems are being rapidly integrated into all aspects of society.

Gender Shades showed that commercial gender classification systems have large disparities in error rates across skin type and gender, and other works discuss the harms caused by the mere existence of automatic gender recognition systems. Recent papers have also exposed shockingly racist and sexist labels in popular computer vision datasets, leading to the removal of some of those datasets.

Yet research is still being published that purports to determine a person’s sexuality from their social network profile images, or claims to classify “violent individuals” from drone footage. Critical public discourse surrounding computer-vision-based technologies has been growing. The use of facial recognition technologies by policing agencies has been heavily criticized and, in response, companies such as Microsoft, Amazon, and IBM have pulled or paused their facial recognition software services.

In this talk, Dr. Timnit Gebru highlights some of these issues and the solutions proposed to mitigate bias. She also shows how some of the proposed fixes could exacerbate the problem rather than mitigate it.